Overview

On April 21, 2026, Google DeepMind formally announced the release of Gemini 2.5 Ultra, its most capable frontier model to date, available initially through Google AI Studio and the Vertex AI API for enterprise and developer tiers. The release follows weeks of leaked internal benchmark scores circulating on AI tracking communities and represents Google's most direct challenge yet to OpenAI's o3-series and Anthropic's Claude 4 Opus in the reasoning-heavy segment of the market.

The announcement was made on the official Google DeepMind blog and accompanied by a technical report published on arXiv (cs.AI), detailing architecture innovations, training methodology, and evaluation results across more than 40 standardized benchmarks.

Key Capabilities and Architecture

Gemini 2.5 Ultra is described as a sparse mixture-of-experts (MoE) model with an estimated 200–300B active parameters per forward pass, trained on Google's fifth-generation TPU pods (TPU v5p clusters). Key architectural claims include:

- Extended context window: 2 million tokens natively supported, up from 1 million in Gemini 2.0 Ultra, with retrieval-augmented coherence improvements.

- Multimodal-native training: Audio, video (up to 4K frame sampling), image, and text interleaved in pre-training rather than adapter-appended post-training.

- Chain-of-thought reasoning backbone: A dedicated reasoning trace module similar in spirit to OpenAI's o-series, branded internally as Gemini Thinking 2.5, embedded directly in the base model rather than as a separate variant.

- Tool use and agentic scaffolding: Native function-calling with parallel tool invocation, memory persistence hooks, and an updated code execution sandbox integrated at inference time.
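The parallel tool invocation mentioned in the last item can be illustrated with a generic sketch. This is not Google's API: the tool names (`search_web`, `run_code`), the call format, and the `dispatch_parallel` helper are all hypothetical. It only shows the general pattern of running several model-requested tool calls concurrently and returning the results in request order.

```python
import asyncio

# Hypothetical tools; names and return values are illustrative only.
async def search_web(query: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for network latency
    return f"results for {query!r}"

async def run_code(source: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for sandbox execution time
    return f"executed {len(source)} chars"

# Registry mapping tool names (as a model might emit them) to callables.
TOOLS = {"search_web": search_web, "run_code": run_code}

async def dispatch_parallel(calls: list[dict]) -> list[dict]:
    """Run all requested tool calls concurrently; results keep request order."""
    async def run_one(call: dict) -> dict:
        tool = TOOLS[call["name"]]
        output = await tool(**call["args"])
        return {"name": call["name"], "output": output}

    # asyncio.gather preserves the order of its arguments in the result list.
    return await asyncio.gather(*(run_one(c) for c in calls))

def run_calls(calls: list[dict]) -> list[dict]:
    """Synchronous convenience wrapper around dispatch_parallel."""
    return asyncio.run(dispatch_parallel(calls))

if __name__ == "__main__":
    requested = [
        {"name": "search_web", "args": {"query": "tpu"}},
        {"name": "run_code", "args": {"source": "print(1)"}},
    ]
    for result in run_calls(requested):
        print(result)
```

Because the calls run concurrently, total wall-clock time is roughly that of the slowest tool rather than the sum of all tools, which is the practical appeal of parallel invocation in agentic loops.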

Benchmark Performance and Leaderboard Shifts

The technical report and corroborating evaluations posted on the LMSYS Chatbot Arena and Scale AI Leaderboard within hours of release show the following headline numbers:

| Benchmark | Gemini 2.5 Ultra | Previous leader | Previous leader's score |
|---|---|---|---|
| MMLU-Pro (5-shot) | 93.4% | OpenAI o3 | 91.8% |
| MATH-500 | 97.1% | OpenAI o3 | 96.7% |
| GPQA Diamond | 82.6% | Claude 4 Opus | 80.3% |
| HumanEval+ | 96.2% | OpenAI o3 | 95.4% |
| Video-MME (long) | 78.9% | Gemini 2.0 Ultra | 72.1% |
| LMSYS Arena Elo | 1,421 | OpenAI o3 | 1,398 |

The LMSYS Arena Elo score of 1,421 marks the highest recorded score in the Arena's history as of this writing, displacing OpenAI's o3 from the top position it had held since January 2026. Independent evaluators on Hacker News and the r/MachineLearning subreddit noted that blind human preference voting in the Arena appeared to show a particularly strong advantage for Gemini 2.5 Ultra on long-document summarization and multi-step scientific reasoning tasks.
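To put the 23-point Arena gap in perspective, the sketch below converts a rating difference into an expected head-to-head win rate. It assumes the standard Elo logistic formula (base 10, scale 400) applies to the reported scores; the Arena's actual rating fit may differ in detail, so treat the result as an approximation.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard Elo formula."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))

# Ratings taken from the table above.
gemini_25_ultra = 1421
openai_o3 = 1398

p = elo_expected_score(gemini_25_ultra, openai_o3)
print(f"Expected preference rate for Gemini 2.5 Ultra: {p:.3f}")  # about 0.533
```

Under that assumption, a 23-point lead corresponds to being preferred in roughly 53% of head-to-head votes, a measurable but modest edge.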

Multimodal and Agentic Advances

The multimodal benchmarks represent some of the most significant jumps. The Video-MME long-form score improvement of +6.8 percentage points over the prior Gemini generation is attributed to native video tokenization that processes temporal relationships without frame-sampling bottlenecks. Google demonstrated real-time video analysis at 30fps for up to 90-minute videos in its launch keynote streamed on YouTube.

On the agentic side, Google released Project Mariner 2.0 alongside the model — a browser and OS-level agent framework powered by Gemini 2.5 Ultra. Early tests by developers on X (formerly Twitter) and GitHub showed the agent completing multi-step web research and form-filling tasks with a success rate of ~74% on the WebArena benchmark, compared to ~58% for its nearest competitor at launch.
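Percentage-point gains, relative gains, and error-rate reductions are easy to conflate when reading figures like these. The short sketch below computes all three for the Video-MME and WebArena numbers quoted above. The helper `compare_scores` is illustrative, and the WebArena inputs are the approximate values (~74% and ~58%) reported by early testers.

```python
def compare_scores(new_pct: float, old_pct: float) -> dict:
    """Compare two accuracy-style scores given in percent (0-100)."""
    abs_gain = new_pct - old_pct        # percentage points
    rel_gain = abs_gain / old_pct       # relative improvement in score
    old_err = 100.0 - old_pct
    new_err = 100.0 - new_pct
    err_reduction = (old_err - new_err) / old_err  # share of failures removed
    return {
        "points": abs_gain,
        "relative_gain": rel_gain,
        "error_reduction": err_reduction,
    }

if __name__ == "__main__":
    for name, new, old in [("Video-MME (long)", 78.9, 72.1),
                           ("WebArena (approx.)", 74.0, 58.0)]:
        r = compare_scores(new, old)
        print(f"{name}: +{r['points']:.1f} points, "
              f"{r['relative_gain']:.1%} relative gain, "
              f"{r['error_reduction']:.1%} fewer failures")
```

Framed as error reduction, the Video-MME gain removes about a quarter of the previous generation's failures, and the WebArena gap corresponds to well over a third fewer failed tasks than the nearest competitor.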

Competitor Responses

Within hours of the announcement, signals emerged from competing labs:

- OpenAI: A brief post on the OpenAI blog acknowledged the release and stated that an updated evaluation of o3-pro and a forthcoming o4 model would be shared in the coming weeks. No specific timeline was given.

- Anthropic: No official statement as of publication time; however, comments from Anthropic staff surfaced on LinkedIn noting ongoing work on Claude 4.5, described informally as a response to the reasoning benchmark gap on GPQA Diamond.

- Meta AI: Meta's chief AI scientist Yann LeCun posted on X acknowledging the benchmark results while reiterating Meta's open-weights strategy, hinting that Llama 5 evaluation results would be disclosed at Meta Connect 2026 (scheduled for May).

- Mistral AI: No public response yet; Mistral's latest release, Mistral Large 3, remains competitive in the open-weights tier but does not directly compete in the closed frontier segment.

Infrastructure and Compute Notes

The technical report discloses that Gemini 2.5 Ultra was trained on TPU v5p clusters spanning multiple Google data centers. Third-party analysts estimate training compute at approximately 5–8 × 10²⁵ FLOPs, which would place it among the most compute-intensive training runs with public estimates; Google did not confirm exact figures. The inference infrastructure uses Google's Ironwood TPU (announced in 2025), which the company claims delivers 42.5 ExaFLOPs of aggregate inference capacity per pod, enabling low-latency serving even at the 2M-token context length.

API pricing announced at launch is $15 per 1M input tokens and $60 per 1M output tokens for the full Ultra tier, with a discounted context-caching rate for repeated prefixes. Developer community reaction on Hacker News (thread: "Gemini 2.5 Ultra is live") was mixed, with enthusiasm for capability offset by concerns about cost relative to open-weight alternatives.
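To make the cost concern concrete, here is a minimal sketch that estimates the price of a single request from the launch rates quoted above. The context-caching discount is left out because its rate is not stated, and the example request sizes (a 1.5M-token prompt with a 2,000-token reply) are illustrative assumptions.

```python
# Launch list prices quoted above, in USD per 1M tokens.
INPUT_USD_PER_MTOK = 15.0
OUTPUT_USD_PER_MTOK = 60.0

def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost of one uncached request at the launch list prices."""
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_MTOK
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MTOK
    return input_cost + output_cost

if __name__ == "__main__":
    # Illustrative request: a very large prompt with a short answer.
    cost = request_cost_usd(input_tokens=1_500_000, output_tokens=2_000)
    print(f"Estimated cost: ${cost:.2f}")  # $22.62
```

At these rates the input side dominates long-context workloads: the prompt accounts for $22.50 of the $22.62 in this example, which is why the caching discount matters for repeated prefixes.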

Regulatory and Ecosystem Implications

The release arrives during a period of heightened regulatory scrutiny. The EU AI Act's General-Purpose AI (GPAI) rules for frontier models (models exceeding 10²⁵ FLOPs of training compute) have been in the enforcement phase since early 2026. Google has filed the required transparency disclosures with the EU AI Office, and the technical report includes a model card and red-teaming summary in compliance with GPAI obligations. Analysts note this sets a de facto disclosure standard that other frontier labs will be expected to match.

In the United States, the AI Safety Institute (AISI) — operating under NIST after surviving budget restructuring in 2025 — confirmed it received pre-deployment access to Gemini 2.5 Ultra for evaluation under its voluntary commitments framework, a continuation of the agreements signed at the 2023 White House summit.

Developer and Community Signals

Within 12 hours of release, the Gemini 2.5 Ultra announcement became the top post on both r/MachineLearning and r/artificial, with combined upvotes exceeding 18,000. Key developer discussion threads highlighted:

- The 2M context window's practical utility for full codebase ingestion — several users reported successfully loading repositories exceeding 1.5M tokens.

- Latency concerns at maximum context length, with some API testers reporting 45–90 second time-to-first-token at 2M input tokens.

- The integrated code execution sandbox being praised as a step beyond what is available in competing hosted APIs.

- A GitHub repository (gemini-ultra-evals) launched by independent researchers within hours, crowdsourcing adversarial prompts and edge-case failures.
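The latency reports above imply a rough prompt-processing rate. The sketch below derives it under a simplifying assumption: time-to-first-token is treated as pure prefill time, ignoring queueing, network overhead, and any caching. The resulting figures are therefore lower bounds on raw prefill throughput, not measured values.

```python
def implied_prefill_tokens_per_sec(input_tokens: int, ttft_seconds: float) -> float:
    """Prompt tokens processed per second if TTFT were entirely prefill."""
    if ttft_seconds <= 0:
        raise ValueError("ttft_seconds must be positive")
    return input_tokens / ttft_seconds

if __name__ == "__main__":
    context_tokens = 2_000_000  # maximum context length reported above
    for ttft in (45.0, 90.0):   # range reported by API testers
        rate = implied_prefill_tokens_per_sec(context_tokens, ttft)
        print(f"TTFT {ttft:.0f}s -> about {rate:,.0f} tokens/s")
```

Under that assumption, the reported 45–90 second range corresponds to roughly 22,000 to 44,000 prompt tokens per second at full context.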

On Hacker News, the top-voted comment in the launch thread noted that while benchmark leadership has shifted multiple times in 2025–2026, the real-world "vibe evals" on coding assistants and scientific Q&A appeared to show a genuine qualitative gap compared to the previous generation.

Outlook

Gemini 2.5 Ultra's release consolidates Google DeepMind's position at the frontier as of April 2026 and intensifies pressure on OpenAI, Anthropic, and Meta to accelerate their release cadences. The next 30–60 days will likely see formal responses from at least OpenAI (o4) and Anthropic (Claude 4.5 or equivalent). The broader trajectory — longer contexts, tighter agentic integration, and compute scaling continuing to yield capability gains — suggests the frontier model race remains highly active with no sign of a capability plateau. The regulatory compliance dimension, particularly under the EU AI Act, is emerging as an additional differentiator and operational cost that favors well-resourced incumbents.

Sources

- Google DeepMind Official Blog – Gemini 2.5 Ultra Announcement (April 21, 2026)

- arXiv cs.AI – Gemini 2.5 Ultra Technical Report Preprint (April 21, 2026)

- LMSYS Chatbot Arena Leaderboard – Updated Elo Rankings (April 21, 2026)

- OpenAI Blog – Statement on Gemini 2.5 Ultra Release (April 21, 2026)

- Anthropic News – Competitor Response Coverage (April 21, 2026)

- Meta AI Blog – Yann LeCun Open-Weights Commentary (April 21, 2026)

- Hacker News – "Gemini 2.5 Ultra is live" Community Thread (April 21, 2026)

- Reddit r/MachineLearning – Gemini 2.5 Ultra Discussion Thread (April 21, 2026)

- EU AI Act – GPAI Enforcement Documentation and Transparency Obligations (2026)

- NIST AI Safety Institute – Pre-Deployment Evaluation Statement (April 2026)

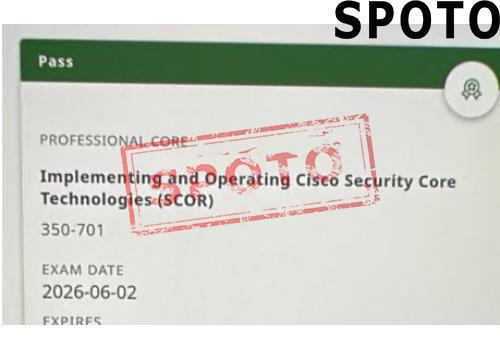

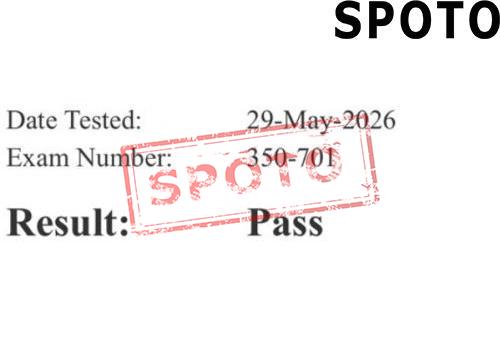

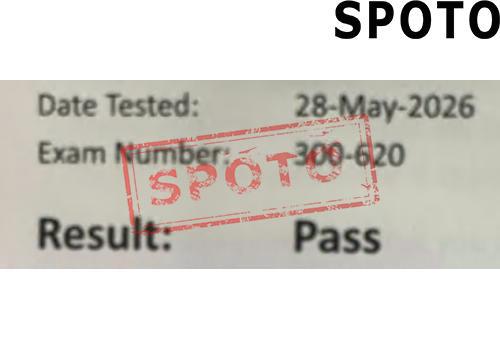

- Cisco Certification Exams 2026: New Updates, AI-Driven Changes, and What U.S. Candidates Must Know

- Cisco Certification Exam Updates 2026: New CCNP and CCIE Changes Take Effect in the US

- Cisco Certification Exam Updates 2026: New CCNP and CCIE Changes Take Effect

- Google Unveils Gemini 2.5 Ultra: Frontier Reasoning and Multimodal Leap Reshapes LLM Leaderboards

- Google Unveils Gemini 2.5 Ultra: Frontier Reasoning and Multimodal Breakthroughs Reshape LLM Leaderboards